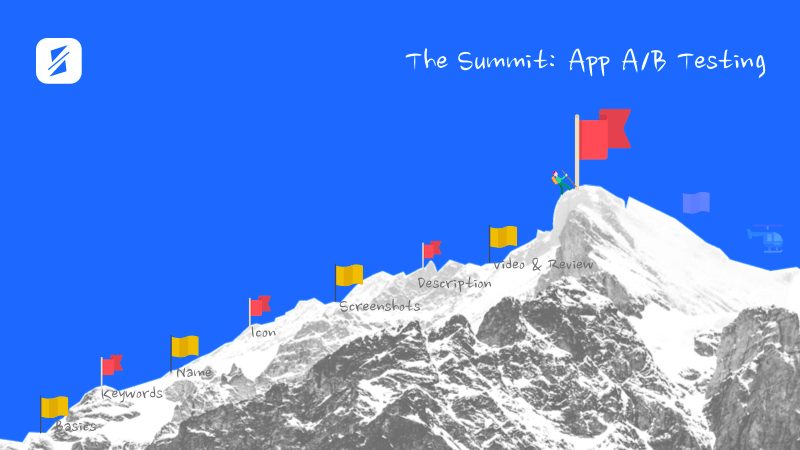

The Summit: App A/B Testing

Episode #8 of the course App Store Optimization (ASO) fundamentals by SplitMetrics Academy

Congratulations! By this point, you’ve learned how (and why) to optimize seven app page elements: keywords, name, description, icons, screenshots, video previews, and ratings and reviews.

We asked you not to implement any changes and ideas you’ve come up with yet. The reason is that your assumptions may be wrong. You never know how potential users will react to a new icon or set of screenshots.

You need a risk-free way to see how new elements perform. That’s why you use split-testing.

Stop guessing and start testing

What name makes your product get noticed? What CTA gets taps? What screenshots drive App Store installs?

In essence, A/B testing is a method of comparing two options and choosing the one with the better results.

You split an audience into equal groups and show each group a different variant of one element. Provided the split is random, each group is representative of the whole audience. As a result, you can see clearly whether users lean toward the first or second option, and you can apply the winner to improve the element’s overall performance.
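To make the splitting step concrete, here is a minimal sketch of one common way to assign users to groups: hashing a user ID. The function name `assign_variant`, the experiment label `icon-test`, and the `user-N` IDs are all hypothetical, chosen for illustration; this is not how SplitMetrics or any particular tool does it.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one variant with roughly equal odds."""
    # Hash the experiment name together with the user ID so the same user
    # always lands in the same group for this experiment.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Turn the hash into an index into the list of variants.
    bucket = int(digest, 16) % len(variants)
    return variants[bucket]

# Check that a made-up audience of 10,000 users splits roughly in half.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "icon-test")] += 1
print(counts)
```

Hashing rather than flipping a coin on every visit means a returning user keeps seeing the same variant, which keeps the two groups cleanly separated.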

App A/B testing is used to increase conversion rates, evaluate a product, validate product positioning, and see how different audience segments behave.

Developing an app A/B testing strategy

Although potential users decide whether to get an app throughout their journey, there are three key points where people drop off:

1. Finding: Search and Search Ads.

Here, focus on Keywords, Name, Icon, Screenshots, and Video Preview.

2. Comparing with competitors: Category.

Here, focus on Name and Icon.

3. Deciding on an install: App Store Page.

Here, focus on Icon, Screenshots, Description, Video Preview, and Rating.

It is crucial to determine the bottleneck of your user acquisition funnel. App Store Analytics will give you initial insights into the steps where potential users drop off.

So you can think of A/B tests not as optimizing individual elements, but as optimizing steps in the funnel.
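As a rough illustration of finding the bottleneck, the sketch below computes the conversion rate between consecutive funnel steps and reports the weakest one. The step names and counts are invented for the example and are not real App Store Analytics output; `find_bottleneck` is a hypothetical helper.

```python
def find_bottleneck(funnel):
    """Return (from_step, to_step, rate) for the weakest transition."""
    worst = None
    # Walk through consecutive pairs of steps: (step 1, step 2), (step 2, step 3)...
    for (prev_name, prev_count), (name, count) in zip(funnel, funnel[1:]):
        rate = count / prev_count if prev_count else 0.0
        if worst is None or rate < worst[2]:
            worst = (prev_name, name, rate)
    return worst

# Made-up numbers, ordered from the top of the funnel to the bottom.
funnel = [
    ("impressions", 50_000),
    ("product page views", 6_000),
    ("installs", 1_800),
]

step_from, step_to, rate = find_bottleneck(funnel)
print(f"Weakest step: {step_from} -> {step_to} ({rate:.1%})")
```

Keep in mind that typical rates differ from step to step, so the lowest raw number is only a starting point. Comparing each step against your own history or category benchmarks gives a fairer picture.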

Testing strategies will vary depending on how people find your app, but the app A/B testing workflow generally goes like this:

1. Research and brainstorming.

Every successful A/B experiment starts with research and a well-formed hypothesis. At this step, you write down what you want to test and what your final goals are.

2. Determine your variants.

Once you have formulated your hypothesis and identified the elements you’ll be testing, you create variations.

3. Run the test.

At this step, you identify your audience and drive it to two variations of one page.

4. Analyze data and review results.

The coolest part: you determine the winner! Here, you look at how people interact with the different pages and, ultimately, whether they press “Install.”

5. Apply the winner.

If you have a clear winner, you can either implement the changes or use it as a starting point for subsequent tests.

6. Run follow-up tests.

Conversion optimization is an ongoing process, and a single test rarely changes much. Continue running tests even if you’re already happy with the results. There’s always room for improvement.
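For step 4, one standard way to check whether a difference between two variations is more than noise is a two-proportion z-test. The sketch below is a generic statistics example, not the method any specific testing tool uses; the visitor and install counts are made up, and `two_proportion_z_test` is a hypothetical helper.

```python
import math

def two_proportion_z_test(installs_a, visitors_a, installs_b, visitors_b):
    """Compare two conversion rates; return (rate_a, rate_b, z, p_value)."""
    rate_a = installs_a / visitors_a
    rate_b = installs_b / visitors_b
    # Pooled rate under the assumption that both variations convert equally.
    pooled = (installs_a + installs_b) / (visitors_a + visitors_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_err == 0:
        raise ValueError("Cannot test: every visitor (or no visitor) installed.")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_a, rate_b, z, p_value

# Made-up results: 2,000 visitors saw each variation.
rate_a, rate_b, z, p_value = two_proportion_z_test(500, 2000, 560, 2000)
print(f"A: {rate_a:.1%}  B: {rate_b:.1%}  z = {z:.2f}  p = {p_value:.3f}")
```

A small p-value (0.05 is a common threshold, though it is a convention rather than a rule) suggests the gap is unlikely to be chance alone. With few visitors, even a large-looking gap can fail this check, which is one reason to let a test run long enough.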

Running an app A/B experiment

App A/B testing can be challenging. Luckily, there is a range of app A/B testing tools that can help you launch experiments. SplitMetrics is one of the best out there: it lets you run experiments for live iOS and Android apps, as well as for apps that haven’t been released yet.

If you have an Android app, consider Google Play Experiment. Facebook Ads is another tool for testing different variations of screenshots and getting a sense of how they will perform.

Quick task: Define the step you want to optimize (finding, comparing with competitors, or deciding on an install) and the elements that can influence its conversion rate. Choose one element (the icon and screenshots are the most exciting) and develop two to three variations of it.

Run an A/B experiment with SplitMetrics or Google Play Experiment, or ask users on social media which variation they prefer. In the next letter, we will talk about how to analyze results and implement changes.

Recommended book

A/B Testing: The Most Powerful Way to Turn Clicks into Customers by Dan Siroker and Pete Koomen
