How Do We See?

Episode #6 of the course Understanding your brain by Betsy Herbert

Hello again!

Yesterday, we began an exploration of the five senses, starting with touch. Today, we’ll be looking at vision—which, as it turns out, is fantastically more complicated than one might expect. It’s tempting to think of vision as a “video camera” that simply collects light and projects it onto a screen at the back of our brains, but in fact, this couldn’t be further from the truth. Let’s dive right in.

Anatomy of the Eye

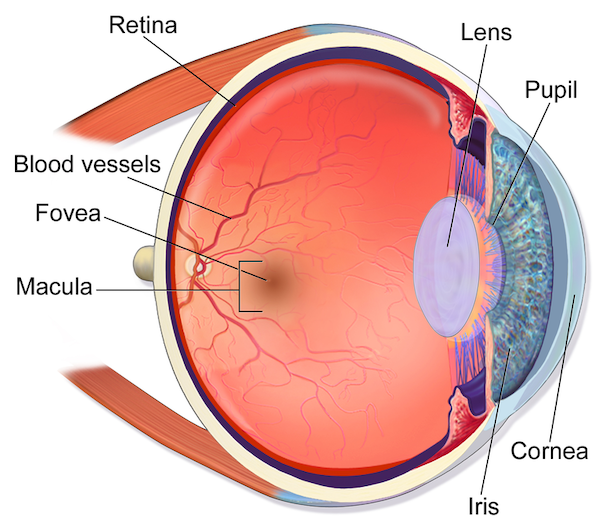

We all know what an eye looks like from the outside. But what’s on the inside? After light enters through the pupil, it is focused by the cornea and lens onto a sheet of cells at the back of the eye called the retina. Some of these cells are photoreceptors, which are sensitive to light. They’re most densely packed in a small central region known as the fovea, where the resolution of our sight is highest. You can see this if you try concentrating on just the “g” in “sight”; everything around it becomes much blurrier.

Eye anatomy.

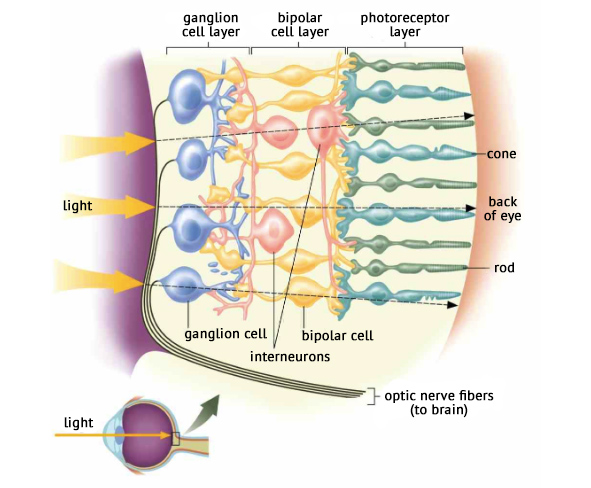

Now, you may have heard that there are two types of photoreceptors: rods and cones. The cones function in bright daylight and are responsible for our color vision. They come in three types, most sensitive to roughly the red, green, and blue parts of the spectrum, and the relative activity of the three tells the brain which color we’re looking at. The rods mainly function in dim light and only detect light intensity, which is why, when it’s very dark, we see only in shades of gray.

Structure of the retina.

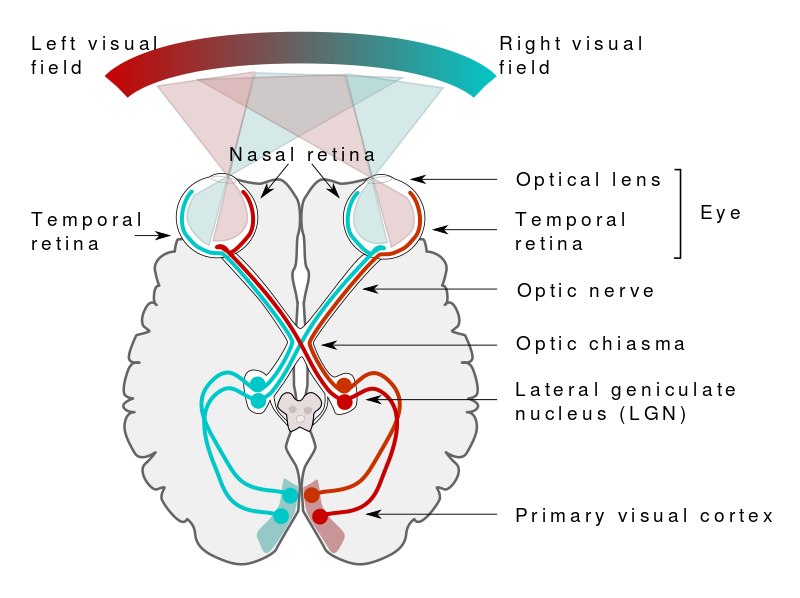

These photoreceptors are simply another type of sensory neuron. When light falls on them, little electrical potentials are generated, which in turn activate bipolar cells and then ganglion cells, whose axons collect together to form the optic nerve. The signal is then sent primarily to the visual cortex in the occipital lobe, at the very back of the brain. Do you remember it from Lesson 1? Rather counterintuitively, that’s where your internal representation of the world is constructed: at the furthest point from the eyes!

Human visual pathway.

Visual Processing

But what happens once the signal reaches the visual cortex? How do we become aware of what we’re seeing?

The visual cortex is divided into many areas, arranged in a hierarchy. The signal is passed through these areas in stages, and a different aspect of what you’re seeing is parsed at each stage. The neurons in the first area, the primary visual cortex, respond best to edges of light falling at a certain orientation on the retina, so at this stage, the lines and angles of the object are extracted. At higher levels, other elements such as shape, color, movement, and object category are determined. It’s amazing to think that the conscious perception and understanding of what we’re looking at (for example, “laptop screen”) is actually the very final stage of a huge amount of unconscious hierarchical processing that labors away as soon as we open our eyes.
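If you like to tinker, the idea of a neuron that “prefers” edges at one orientation can be sketched in a few lines of Python. This is only a toy illustration, not a model of real cortical circuitry: the 3x3 weight grids, the tiny sample images, and the function names `response` and `preferred_orientation` are all made up for this example.

```python
# Toy sketch of orientation-selective "edge detector" units.
# Assumption: each 3x3 grid of weights stands in for one hypothetical
# neuron that responds most strongly to an edge at one orientation.

VERTICAL_EDGE = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

HORIZONTAL_EDGE = [
    [-1, -1, -1],
    [0, 0, 0],
    [1, 1, 1],
]

# Tiny grayscale "retinal images": 0 is dark, 1 is bright.
LEFT_DARK_RIGHT_BRIGHT = [[0, 0, 1, 1, 1] for _ in range(5)]
TOP_DARK_BOTTOM_BRIGHT = [[0] * 5, [0] * 5, [1] * 5, [1] * 5, [1] * 5]


def response(image, weights, row, col):
    """Strength of one unit's response to the 3x3 patch centred on (row, col).

    (row, col) must not lie on the border of the image.
    """
    total = 0
    for dr in (-1, 0, 1):
        for dc in (-1, 0, 1):
            total += weights[dr + 1][dc + 1] * image[row + dr][col + dc]
    # Ignore the sign, so dark-to-bright and bright-to-dark edges count alike.
    return abs(total)


def preferred_orientation(image, row, col):
    """Report which of the two units responds more strongly at (row, col)."""
    vertical = response(image, VERTICAL_EDGE, row, col)
    horizontal = response(image, HORIZONTAL_EDGE, row, col)
    if vertical == horizontal:
        return "no preference"
    return "vertical" if vertical > horizontal else "horizontal"
```

Calling `preferred_orientation(LEFT_DARK_RIGHT_BRIGHT, 2, 2)` returns `"vertical"`, while the same call on `TOP_DARK_BOTTOM_BRIGHT` returns `"horizontal"`: each unit only “fires” when the boundary between dark and bright lines up with its own pattern of weights.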

And that’s not all! While it’s gradually constructing the world from basic elements of light, the brain also edits and fine-tunes the raw input. For example, whenever we move our eyes, the signals are suppressed so we don’t get dizzy from the motion blur (we’re effectively blind for a fraction of a second!). The brain must then “stitch together” these static images of the world into a smoothly flowing stream. There’s also the question of attention: Given a finite processing capacity, which features of the environment must we focus on, and which can we ignore? The brain selectively makes some features stand out and edits out the rest, leading to fascinating phenomena like change blindness and inattentional blindness.

As you can see, visual processing is a hugely complex and intriguing area that is only just beginning to be understood. We’ve had a quick whistle-stop tour today, but we’ve only scratched the surface. If you’re interested, I’d strongly encourage you to read further and see what else you can discover.

Up next: hearing, smell, and taste! See you tomorrow!

Betsy
