Arrays

Episode #4 of the course Kurt Anderson by Computer science fundamentals

Welcome back!

Now that we have a good understanding of time analysis, let’s take a look at our first data structure, the array.

Data structures are simply just different ways to store data. Remember, computer science is all about manipulating data in some way, shape, or form. Data structures are the tools with which we can do this.

What Are Arrays?

Fixed arrays are the most basic version of an array. These arrays are created with a fixed size and stick to that size permanently. This means there is no way to increase or decrease the size of the array. (More advanced arrays can adjust size at a time penalty, but for the purpose of this lesson, we’ll assume they can’t).

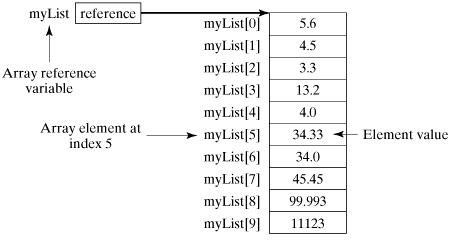

Here is a diagram that shows how an array works:

An array allocates a section of memory for storage with a specific name. In code, we can give it any name we like. In the above situation, we use “myList” as the name for this array.

We can then add a number in [] to call a specific point in that array. Note, computer science is 0 based. So, arrays always start at 0, not 1, meaning that if you called myList[1], you would actually be grabbing the second element in the array. (This is commonly forgotten among computer scientists and is referred to as an “off by one error”).

If we call myList[0], we will get the element at the 0 spot, which in our case, is 5.6. These calls are constant time (O(1)), meaning no searching has to be done. We call myList[5] and it will return in the same amount of time as myList[1]. As you’ll see later, this is not the case for other data structures.

Pros and Cons?

Arrays are great data structures for holding a locked amount of data. They have constant time insertion, removal, and access.

They are size limited, however. If you need more data, you will be out of luck. Along with this, they can be memory intensive. If you have an array that is 50,000 slots big but only has 2,000 pieces of data, 48,000 slots will go to waste. as they can’t be used by other programs.

Array Run Times

Insertion: O(1) – It’s easy to insert randomly anywhere in the array. All you need is the index number and you can insert here in O(1) time.

Deletion: O(1) – Deleting randomly from the array is just like inserting randomly.

Search unsorted: O(n) – If the array is unsorted, there is no way of knowing where the element is going to be. So at worst case, it’s going to be a search of the entire array to find the element. (Is it in element 0? Nope, then let’s check element 1; nope. Element 2, nope, etc. until found).

Search sorted: O(logn) – This is explained below.

O(logn)

Logn is important to computer science. In mathematics, it is the inverse (opposite) of an exponential function. This means as time goes on, it will increase less and less, until it nearly becomes a horizontal line.

This time can happen if we try to search a sorted array. We keep cutting the search area in half to find our number. Take this array, for example: [1,3,4,5,9,19,20,21,26,64,74]. Say we want to search the array to see if 64 is in it. What we do is start in the middle, see if the number is <, =, or >. If greater, we go to the middle of the right half; if less, the middle of the left half.

Step 1. Start at the middle. We take the size, 11, and divide by 2, rounding down: 11/2 = 5. We then take the element at this location (remember, the first element is 0, not 1), so 19. 64 is greater than 19, so we do the same in the right half.

Step 2. Halfway between index 5 and 11 is 8. So, we take the element there, which is 26.

Step 3. 64 is greater than 26, so we take the middle of the right half, which is 9. The element at location 9 is 64. We have found our match.

Since we are cutting each piece in half, we get O(logn).

Key takeaways:

• Arrays are a continuous section of memory.

• Accessing, storing, and deleting from an array is constant time.

• Arrays are typically locked in size, meaning you need to know the amount of data coming in before creating the array.

• Arrays can take up a lot of unused space if not filled up.

Tomorrow, we will look at a data structure that can expand indefinitely.

Cheers,

Kurt

Recommended resources

Java—Arrays: Learn more about how arrays work in the popular Java.

JavaScript Arrays: Learn more about how to arrays work in the popular web language, Javascript.

Share with friends